AI-Powered Deployment Automation

An AI-powered agent that installs the BlueOptima integrator on any client VM — automatically, safely, and with complete audit logging. From a 3-hour manual process to a 15-minute automated one.

01 — The Problem

A manual process that couldn't scale

Installing the BlueOptima integrator on client VMs was entirely manual — requiring deep technical knowledge, step-by-step execution, and dedicated engineer time. As client volume grew, this process became the single biggest bottleneck to onboarding.

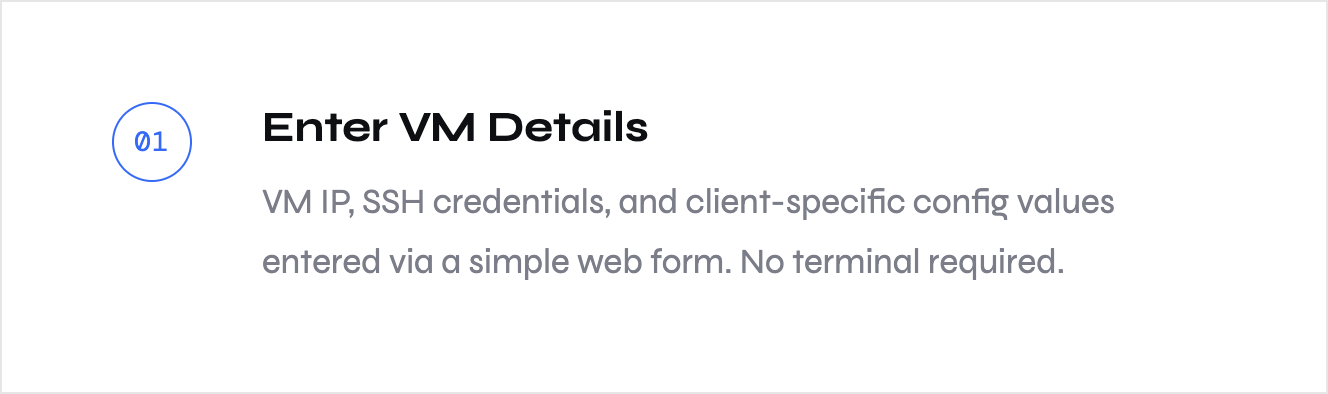

Six steps from zero to running integrator

02 — The Solution

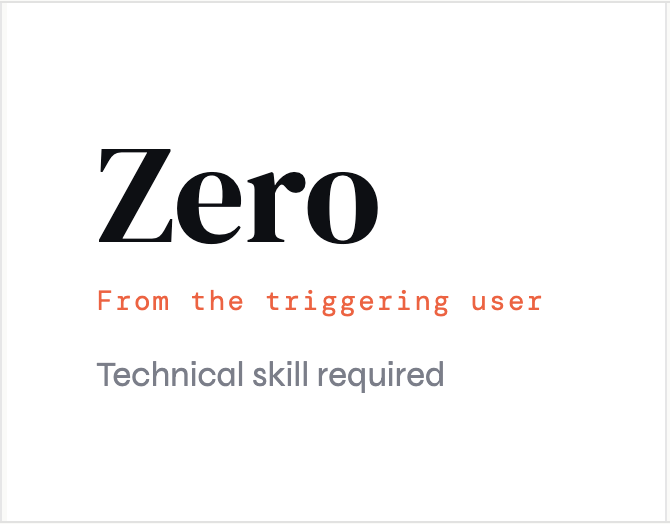

A six-step orchestrated flow designed so that any team member — with zero command-line knowledge — can deploy the BlueOptima integrator on any client VM. The agent handles the complexity; humans stay informed and in control.

Average expected duration: under 15 minutes · No engineer on the call required · Full rollback if anything fails
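The "full rollback" promise can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the agent's actual code: `Step`, `run_with_rollback`, and the log format are hypothetical names, and the real six steps are not reproduced here. The idea is only that each step carries its own undo action, and a failure unwinds the completed steps in reverse order while writing to an audit log.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    """One unit of the install flow (hypothetical structure)."""
    name: str
    run: Callable[[], None]    # performs the step; raises on failure
    undo: Callable[[], None]   # reverses the step if a later one fails


def run_with_rollback(steps: List[Step], log: List[str]) -> bool:
    """Run steps in order. On the first failure, undo every completed
    step in reverse order and return False. Every outcome is logged."""
    completed: List[Step] = []
    for step in steps:
        try:
            step.run()
        except Exception as exc:
            log.append(f"FAILED {step.name}: {exc}")
            for done in reversed(completed):
                done.undo()
                log.append(f"ROLLED BACK {done.name}")
            return False
        completed.append(step)
        log.append(f"OK {step.name}")
    return True
```

Keeping the undo action next to the run action means a step cannot be added to the flow without someone deciding how it is reversed.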

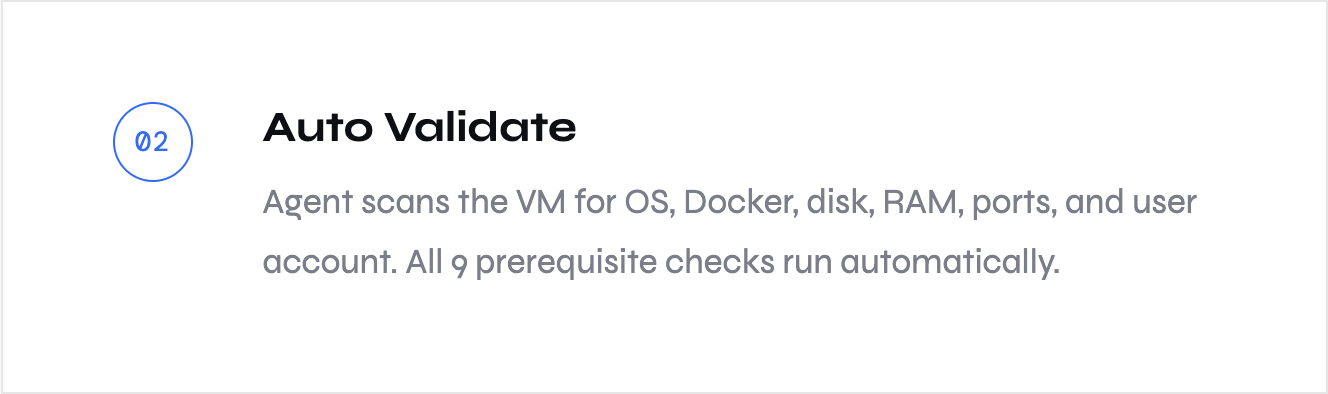

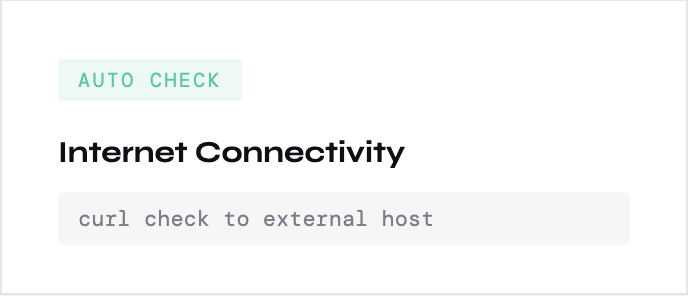

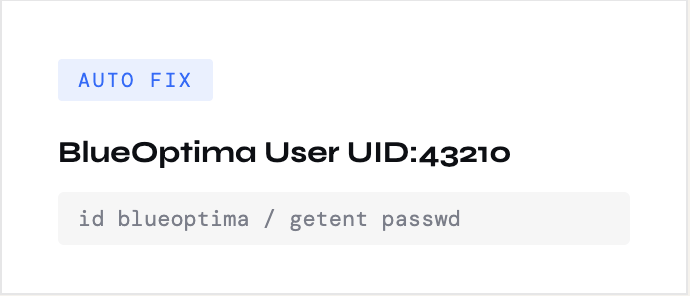

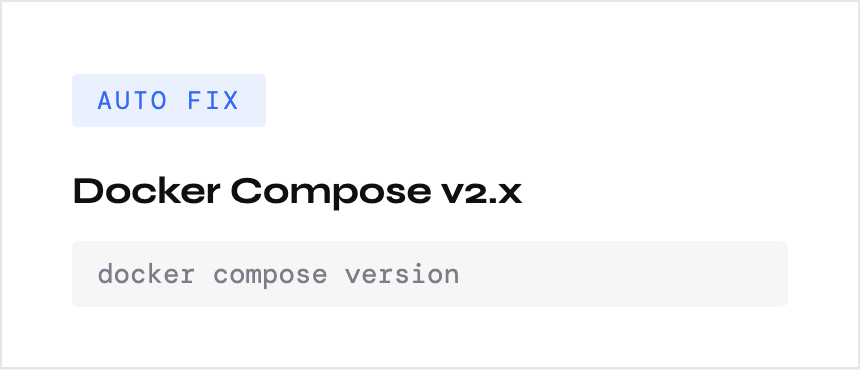

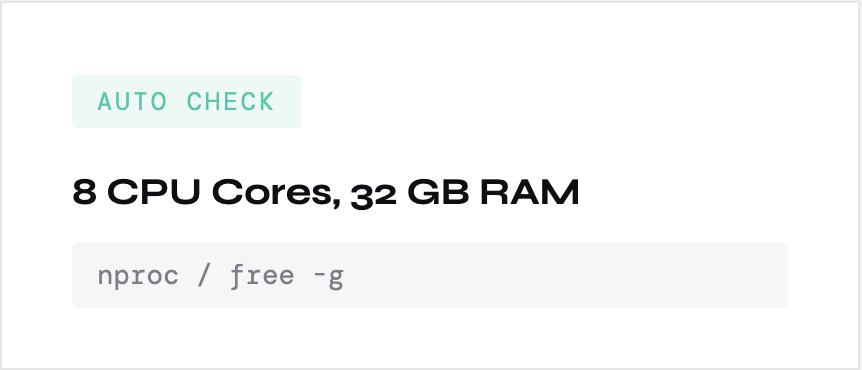

9 checks before a single command runs

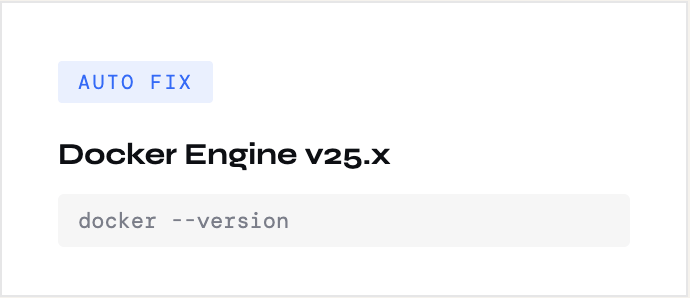

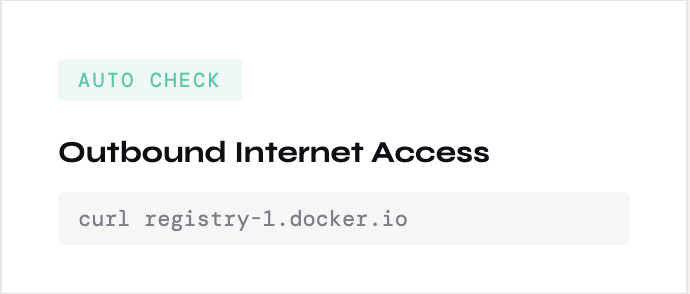

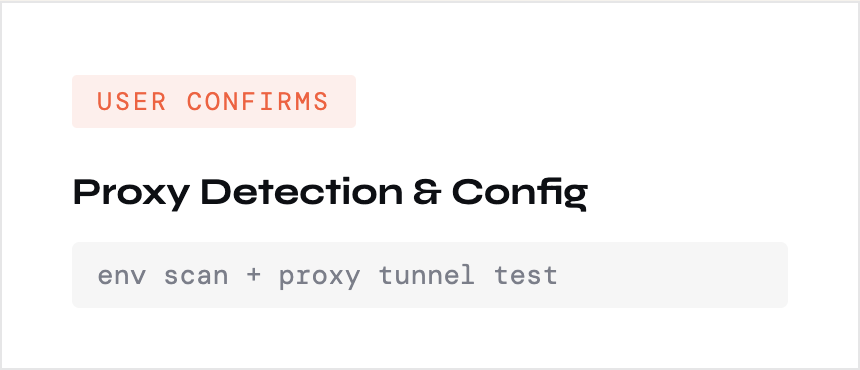

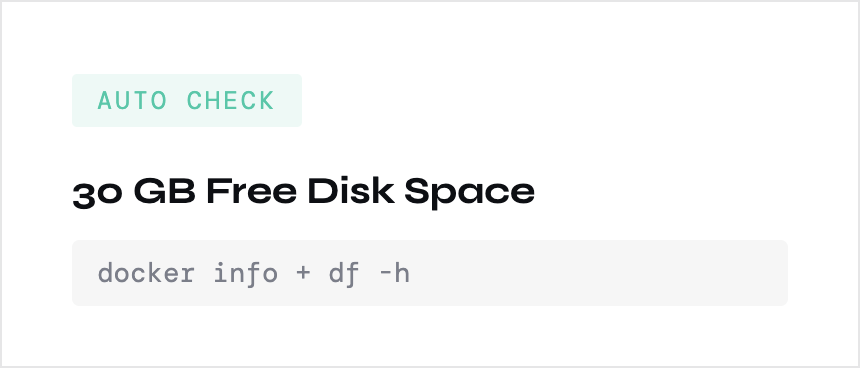

03 — Pre-flight Validation

Every BlueOptima requirement is validated automatically before the install begins. Some checks auto-fix issues; others require human confirmation — a deliberate design choice to maintain oversight without slowing things down.

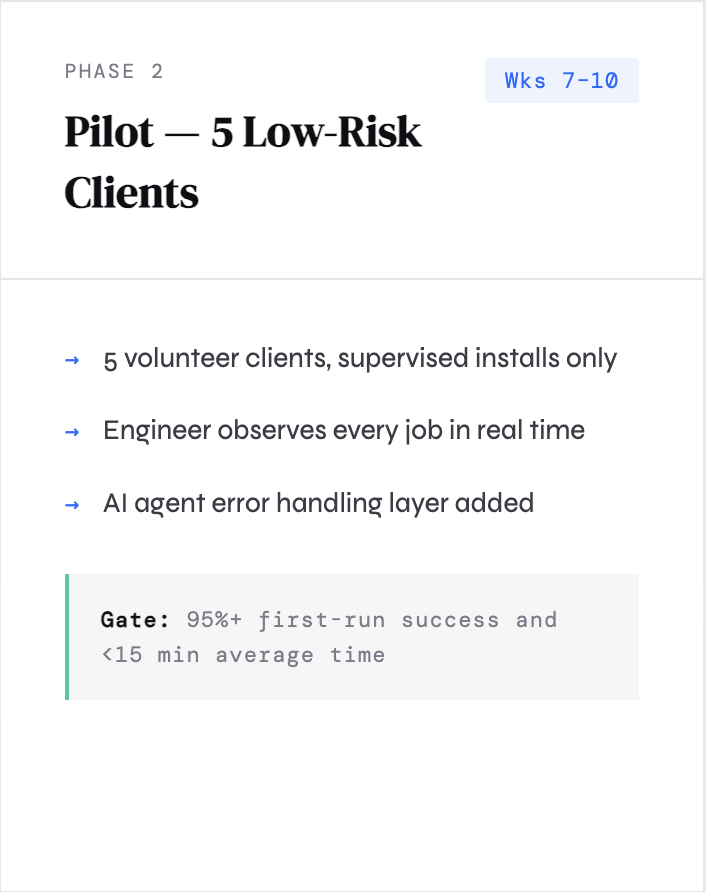
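The split between auto-fix checks and human-confirmed checks could look something like the sketch below. Everything named here (`Check`, `OnFail`, `run_preflight`, the `confirm` callback) is an assumption made for illustration; the actual nine checks and how they are implemented are not described in the text.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, List, Optional


class OnFail(Enum):
    AUTO_FIX = "auto_fix"     # the agent may repair the issue itself
    ASK_HUMAN = "ask_human"   # a person must confirm before continuing


@dataclass
class Check:
    name: str                                  # hypothetical check label
    passes: Callable[[], bool]                 # True when the requirement is met
    on_fail: OnFail
    fix: Optional[Callable[[], None]] = None   # only used for AUTO_FIX checks


def run_preflight(checks: List[Check], confirm: Callable[[str], bool]) -> bool:
    """Return True only if every check passes, is repaired by its
    auto-fix, or is explicitly accepted by a human via `confirm`."""
    for check in checks:
        if check.passes():
            continue
        if check.on_fail is OnFail.AUTO_FIX and check.fix is not None:
            check.fix()
            if check.passes():
                continue
            return False  # the fix did not resolve the issue: stop here
        # ASK_HUMAN checks (and AUTO_FIX checks with no fix) need a person.
        if not confirm(check.name):
            return False
    return True
```

In this sketch nothing is installed unless `run_preflight` returns `True`, so the install commands never see a VM that failed validation.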

Every risk has two layers of protection

04 — Safety Architecture

The safety model was a core design constraint from day one: for every risk category, there is a prevention control to avoid the failure and a recovery control to handle it if it happens anyway.

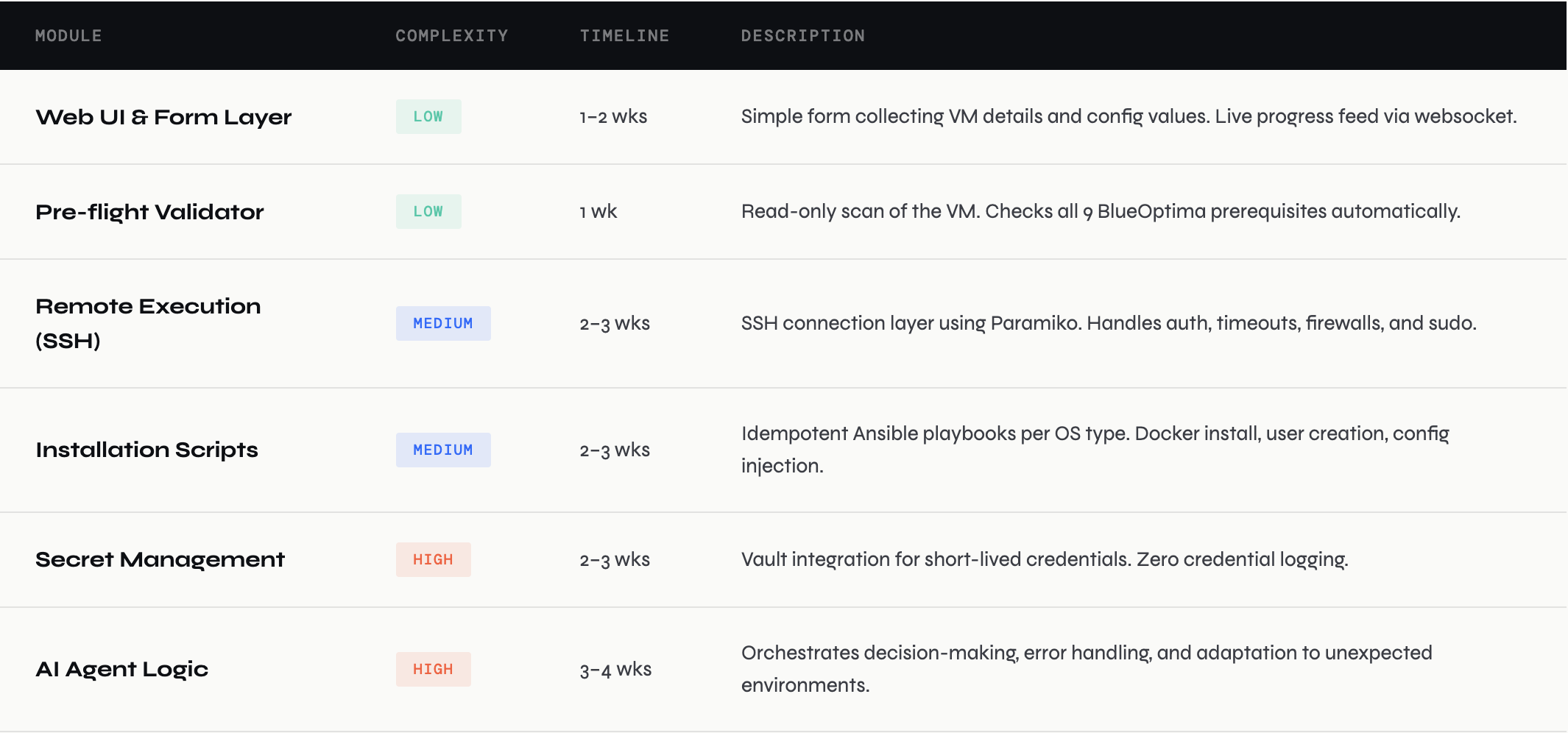
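One way to picture the two-layer model in code is to make both controls mandatory fields of the same record, so a risk cannot be registered with only one of them. The names below (`RiskControl`, `run_guarded`, the three return strings) are hypothetical and chosen for this sketch only.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class RiskControl:
    risk: str                     # hypothetical risk category label
    prevent: Callable[[], bool]   # layer 1: True when it is safe to proceed
    recover: Callable[[], None]   # layer 2: cleans up if the action fails anyway


def run_guarded(action: Callable[[], None], control: RiskControl) -> str:
    """Apply the prevention control first, then the action, and fall
    back to the recovery control if the action still fails."""
    if not control.prevent():
        return "blocked"       # prevention stopped the action before it ran
    try:
        action()
    except Exception:
        control.recover()
        return "recovered"     # the action failed and recovery handled it
    return "ok"
```

Because `prevent` and `recover` have no defaults, constructing a `RiskControl` without either one raises a `TypeError`, which mirrors the rule that every risk gets both layers.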

10–14-week build mapped to complexity

05 — Engineering Complexity

As lead designer, I am working alongside engineering to define each module, its risk profile, and its dependencies. Sequencing safety controls before AI features is a deliberate decision: it ensures a safe foundation is in place before any autonomous behaviour is added.

Estimated total: 10–14 weeks with a team of 2–3 engineers · Recommended: start safety controls before AI features

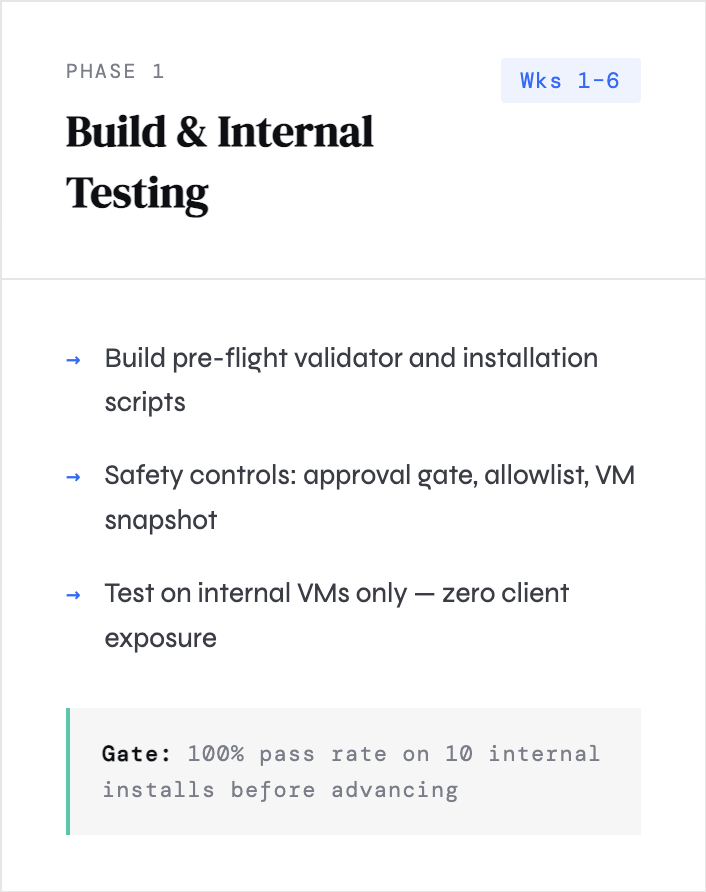

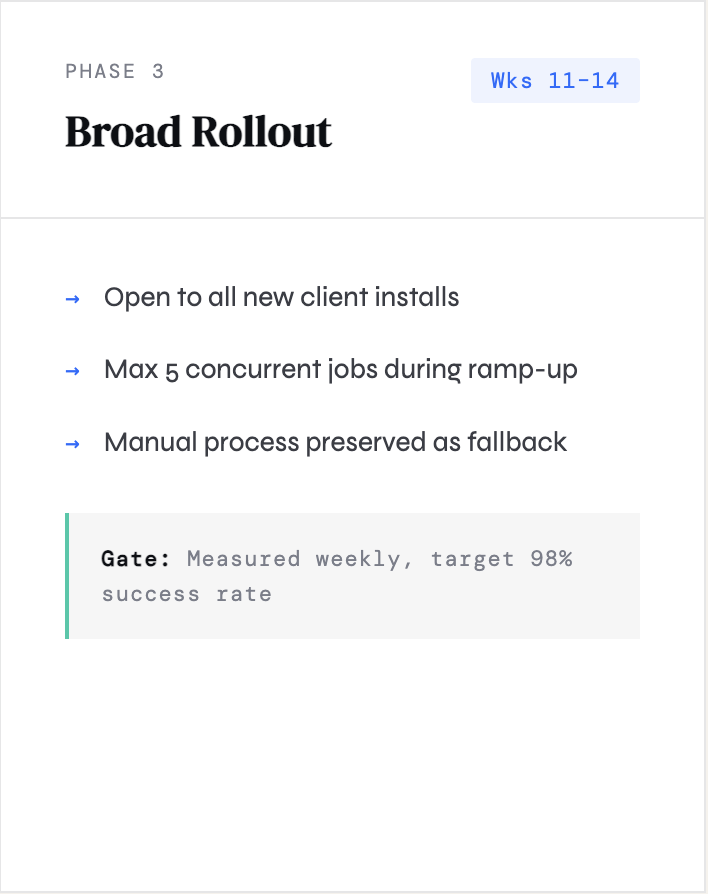

Three phases, each gated on evidence

06 — Rollout Strategy

No phase advances until its measurable success criteria are met. This wasn't just a safety choice; it was a trust-building strategy that gives stakeholders clear checkpoints and engineers a controlled ramp.
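The gating rule is simple enough to express as a single function. This is a sketch under assumptions: the metric names and thresholds shown in the usage example are made up for illustration, and `may_advance` is a hypothetical helper, not part of the product.

```python
from typing import Dict


def may_advance(criteria: Dict[str, float], observed: Dict[str, float]) -> bool:
    """Return True only when every criterion has an observed value at or
    above its threshold. A phase with no criteria never advances, and a
    metric that has not been measured counts as not met."""
    return bool(criteria) and all(
        name in observed and observed[name] >= threshold
        for name, threshold in criteria.items()
    )
```

For example, with a made-up criterion `{"install_success_rate": 0.95}`, a phase holds at an observed rate of `0.90`, holds if the rate was never measured, and advances at `0.97`.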

"The AI reasons, humans decide. No action is taken on a client VM without explicit human confirmation."

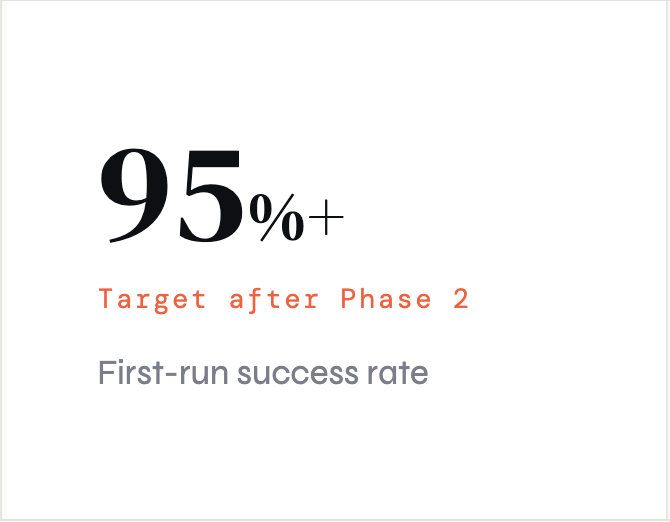

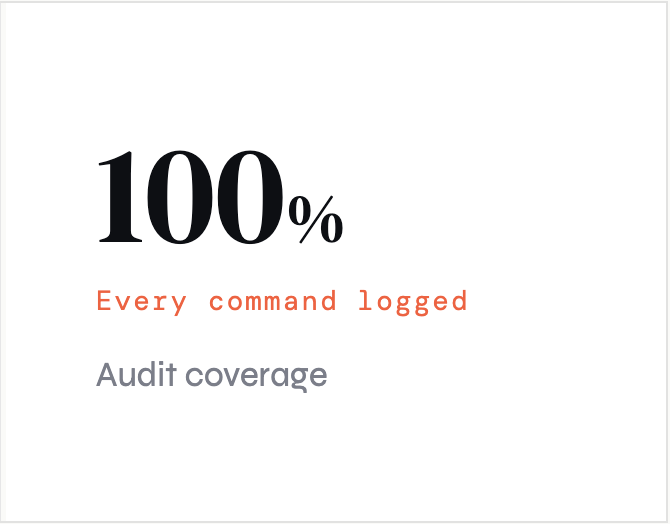

Measurable outcomes at every level

07 — Business Impact

The Integrator Agent was designed with a clear business case from the start. Every design decision maps back to one of four quantifiable outcomes.

What I learned leading this project

08 — Designer Reflection

The Integrator Agent was one of the most complex design challenges I've worked on, not because the UI was complicated, but because the hardest design problems were invisible. How do you make an AI agent feel trustworthy? How do you design human oversight into a largely automated system without killing the efficiency gains?

The answer we landed on was to make every action legible before it runs. The review-and-confirm step (Step 3) was initially cut from early prototypes for being "friction." I pushed back hard. That step is the entire trust architecture. It's where the agent becomes a collaborator rather than a black box.

I also learned that designing for engineers installing on client VMs is very different from designing for end users. Failure modes, error messages, and audit logs are first-class features, not afterthoughts. The kill switch in the UI isn't edge-case UX. It's the central promise of the product.

The phased rollout plan was also a design artefact, not just an engineering plan. It structured how we'd earn trust from stakeholders and built credibility for the AI features before they were needed.